How to Measure Test Results

Interpreting and tracking results is the most important step of the entire A/B testing process, because this is where marketers discover what they need to change to make their campaigns more effective. Optimizely recommends assessing results in terms of added value: even a small percentage increase in conversion rate can translate into a major difference in revenue.
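To make the "added value" idea concrete, here is a minimal Python sketch that turns a relative lift in conversion rate into extra revenue. The function name `added_monthly_revenue` and every number in the usage line (traffic, baseline rate, lift, average order value) are hypothetical illustration values, not figures from Optimizely or any real test.

```python
def added_monthly_revenue(visitors: int, baseline_rate: float,
                          relative_lift: float, avg_order_value: float) -> float:
    """Extra revenue per period from a relative lift in conversion rate.

    Hypothetical helper for illustration; all inputs are assumed values.
    """
    baseline_orders = visitors * baseline_rate
    improved_orders = visitors * baseline_rate * (1 + relative_lift)
    # Only the additional orders count as added value.
    return (improved_orders - baseline_orders) * avg_order_value


# Hypothetical site: 200,000 visitors/month, 2% baseline conversion,
# $80 average order, and a 5% relative lift (2.0% -> 2.1%).
extra = added_monthly_revenue(200_000, 0.02, 0.05, 80.0)
print(f"Added revenue per month: ${extra:,.2f}")
```

Under these assumptions, a lift of just 0.1 percentage points works out to 200 extra orders, or roughly $16,000 a month.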

STATISTICAL SIGNIFICANCE

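Once you have your results, determine whether a statistically significant difference exists between your two versions. The method used to establish this is hypothesis testing, and the outcome we are looking for is called statistical significance. A result is statistically significant when the observed difference between the versions is unlikely to be explained by random chance alone, judged against a confidence level you choose in advance (commonly 95%). In other words, the confidence level answers the question: how sure are we that the results of our A/B test are real and not a fluke?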
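For readers who want to see what such a test involves, below is a minimal sketch of a two-sided two-proportion z-test, one common way to compare conversion rates between version A and version B. It assumes independent visitors and samples large enough for the normal approximation to hold; the function name `two_proportion_z_test` and the visitor and conversion counts are hypothetical, and individual calculators may use different methods.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided two-proportion z-test using the pooled standard error.

    Returns (z, p_value). Hypothetical helper; assumes independent visitors
    and large enough samples for the normal approximation.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis (no real difference).
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: 10,000 visitors per version.
z, p = two_proportion_z_test(conv_a=200, n_a=10_000, conv_b=250, n_b=10_000)
alpha = 0.05  # corresponds to a 95% confidence level
print(f"z = {z:.2f}, p = {p:.4f}, significant: {p < alpha}")
```

With these made-up numbers (2.0% vs. 2.5% conversion), z is about 2.4 and the p-value is below 0.05, so the difference would count as significant at the 95% confidence level.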

Instead of performing the significance tests yourself, you can use an online A/B testing significance calculator. Websites such as Optimizely and KISSmetrics offer these tools for free, and it's never a bad idea to run your numbers through two different calculators to double-check your results.

NEGATIVE RESULTS

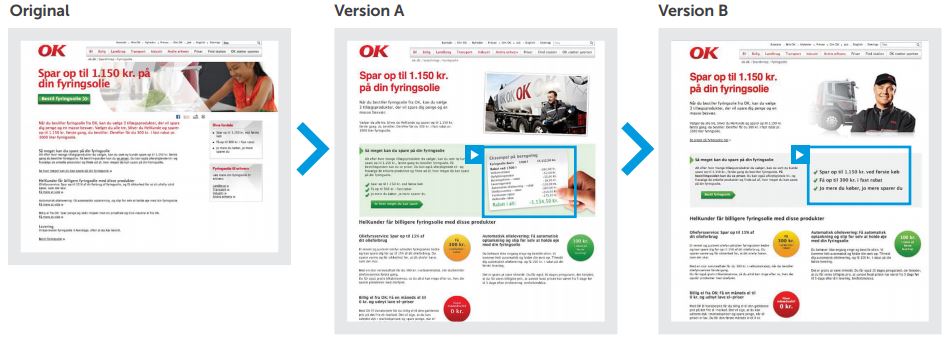

Marketers should also remember that even negative and neutral results can help them better understand their customers. For example, OK A.M.B.A., a Scandinavian oil and energy company, got a negative result in an A/B test but later turned it into a win: an increase in conversions of almost 50%!

How did they do that? They wanted to change a particular landing page to drive more conversions through that page alone. The page was copy-heavy and lacked visuals, so they set out to fix that. The variant in the first test added an image to the page; however, this image showed a breakdown of how an offer was calculated. The result was 30% fewer conversions! The company had assumed that customers would want a better understanding of the calculation, but that assumption turned out to be wrong.

The hypothesis was invalidated. So they tested again, this time replacing the image with a checklist of what the customer would get in the offer, and conversions jumped by nearly 50%. The takeaway is that customers may surprise you and disprove your hypothesis completely. That's OK. What matters is learning from the test: learning more about your customers' preferences, what attracts them, and what makes them tick. Then, based on this information, test again and again until you uncover what actually makes conversions go through the roof. Think of A/B testing as a long but reliable learning process.

Here are some key takeaways for the savvy marketer:

- There’s no time like the present—start testing now!

- Don’t assume you know what your audience is thinking—test to confirm your instincts.

- Act on what you learn from testing: A/B testing is your vehicle for optimizing your marketing efforts.

Clearly, A/B testing is an ongoing process and not a one-time-only event. People, trends, and preferences change over time, and marketers will have to respond accordingly. The best way to stay at the top of your game is to consistently test elements across all your campaigns so that you don’t neglect any opportunities for bringing in sales and conversions.

A/B testing is revolutionizing marketing because its results come from real customer behavior, and the data can be applied immediately to improve campaigns.

The beauty of the A/B test is that the possibilities are endless. Marketers can always learn something new and continue to improve their marketing efforts. It simply takes perseverance, creative thinking, and swift automation. Don’t guess. Test!